GPT-5.4 API Guide for Developers: 1M Context Window, Computer Use, and Real Integration Notes

GPT-5.4 brings a 1M token context window, native computer use, and tunable reasoning effort to the OpenAI API. Here is a practical breakdown from integrating it into two production systems.

GPT-5.4 dropped on March 5, 2026, and the headline features — 1 million token context window and native computer use — immediately triggered a round of "should we migrate?" discussions in our team at Warung Digital Teknologi. After integrating it into two production systems over the past six weeks, here is my technical breakdown of what actually matters for developers building real products.

What Changed From GPT-5.2 to GPT-5.4

GPT-5.4 is the first mainline reasoning model that absorbs the agentic coding capabilities from GPT-5.3-Codex. Practically, that means three meaningful shifts for API users:

- Context window: 128K → 1M tokens. The ceiling matters, but the cost curve past 272K is steep — more on that below.

- Native computer use. Pass a computer_use tool type through the Responses API and the model can interpret screenshots, click buttons, and operate desktop UIs. It scores 75.0% on OSWorld-Verified, clearing the 72.4% human expert baseline, the first model to do so.

- Tunable reasoning effort. A new reasoning.effort parameter lets you set none | low | medium | high | xhigh. Higher effort means better answers on complex tasks, higher latency, and higher cost.

These are not cosmetic upgrades. When I integrated GPT-5.4 into ContentForge AI Studio — which processes multi-document content briefs and generates structured output for 30+ client sites — the ability to hold an entire editorial calendar plus source documents in a single context window changed the architecture significantly. One full document pass replaced what previously required three chunked API calls with a stitching layer.

API Setup: Model Name and Pricing

The model alias in the API is simply gpt-5.4. If you are already on GPT-5.2, the endpoint call looks identical:

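```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

# Placeholder input: the source code you want reviewed.
code_block = "def add(a, b):\n    return a - b"

response = client.chat.completions.create(
    model="gpt-5.4",
    messages=[
        {"role": "system", "content": "You are a code reviewer."},
        {"role": "user", "content": code_block},
    ],
    reasoning_effort="medium",  # new parameter
)
print(response.choices[0].message.content)
```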

Pricing breakdown as of March 2026:

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| gpt-5.4 | $2.50 | $15.00 |
| gpt-5.4-mini | ~$0.40 | ~$1.60 |

The surcharge trap: once your input exceeds 272K tokens, OpenAI applies a 2× input / 1.5× output multiplier. Assuming the multiplier covers the whole request, a 500K-token context run costs about twice as much on input as two 250K-token runs. Budget for this before committing to full-million-token architectures in your system design.
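To put numbers on the surcharge, here is a minimal cost-estimator sketch using the prices from the table above. It assumes the multipliers apply to the entire request once input crosses 272K tokens, which is how the rule reads here but is worth confirming against your own invoices. `estimate_cost` and the constants are illustrative names, not part of the OpenAI SDK.

```python
# Prices from the table above (USD per 1M tokens). Threshold and multipliers
# follow the surcharge rule as described in this article.
INPUT_PRICE = 2.50
OUTPUT_PRICE = 15.00
SURCHARGE_THRESHOLD = 272_000
INPUT_MULTIPLIER = 2.0
OUTPUT_MULTIPLIER = 1.5


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one gpt-5.4 request.

    Assumption: once input exceeds the threshold, the multipliers apply to
    the whole request, not only to the tokens above the threshold.
    """
    over = input_tokens > SURCHARGE_THRESHOLD
    in_rate = INPUT_PRICE * (INPUT_MULTIPLIER if over else 1.0)
    out_rate = OUTPUT_PRICE * (OUTPUT_MULTIPLIER if over else 1.0)
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


# Same total work, packaged as one large request or two smaller ones.
one_large = estimate_cost(500_000, 2_000)
two_small = 2 * estimate_cost(250_000, 1_000)
print(f"one 500K run:  ${one_large:.3f}")
print(f"two 250K runs: ${two_small:.3f}")
```

Under that assumption the single 500K run comes to about $2.55 against $1.28 for the split version, so the long-context design needs to earn roughly double its cost in saved engineering or better answers.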

The 1M Context Window — What It Actually Unlocks (and What It Does Not)

When I tested full-million-token contexts on our DocSumm AI Summarizer pipeline — which processes legal document packages for three enterprise clients — the results were useful but not uniformly positive.

OpenAI's own Graphwalks benchmark shows accuracy at roughly 93% within 128K tokens, dropping to 21.4% for content positioned between 256K and 1M. That is not a warning you will find in the marketing copy, but it is the number that governs production reliability.

Where the 1M window genuinely helps:

- Codebase-wide refactors. Passing an entire Laravel monorepo for dependency analysis works well when critical logic lives within the first 200K tokens and the rest is reference context.

- Multi-document Q&A with structured sources. Feed 50 contracts into a single prompt and ask the model to identify conflicting clauses — the semantic connections hold up at mid-range context lengths.

- Long-running agent trajectories. For our BizChat Revenue Assistant, we maintain a growing conversation history across the session. The 1M window means we almost never need to truncate within a single session, which removes a class of subtle state-loss bugs.

Where it underperforms: Verbatim recall of specific facts buried past the 300K mark. If you need the model to extract a specific clause from page 480 of a 600-page document, performance degrades noticeably. Use chunked retrieval (RAG) for precision extraction; use the long context for reasoning over a broad corpus where approximate recall is acceptable.

Real Use Cases From Our Stack

Across the 50+ projects we have shipped at wardigi.com, these three patterns have had the most concrete impact after the GPT-5.4 migration:

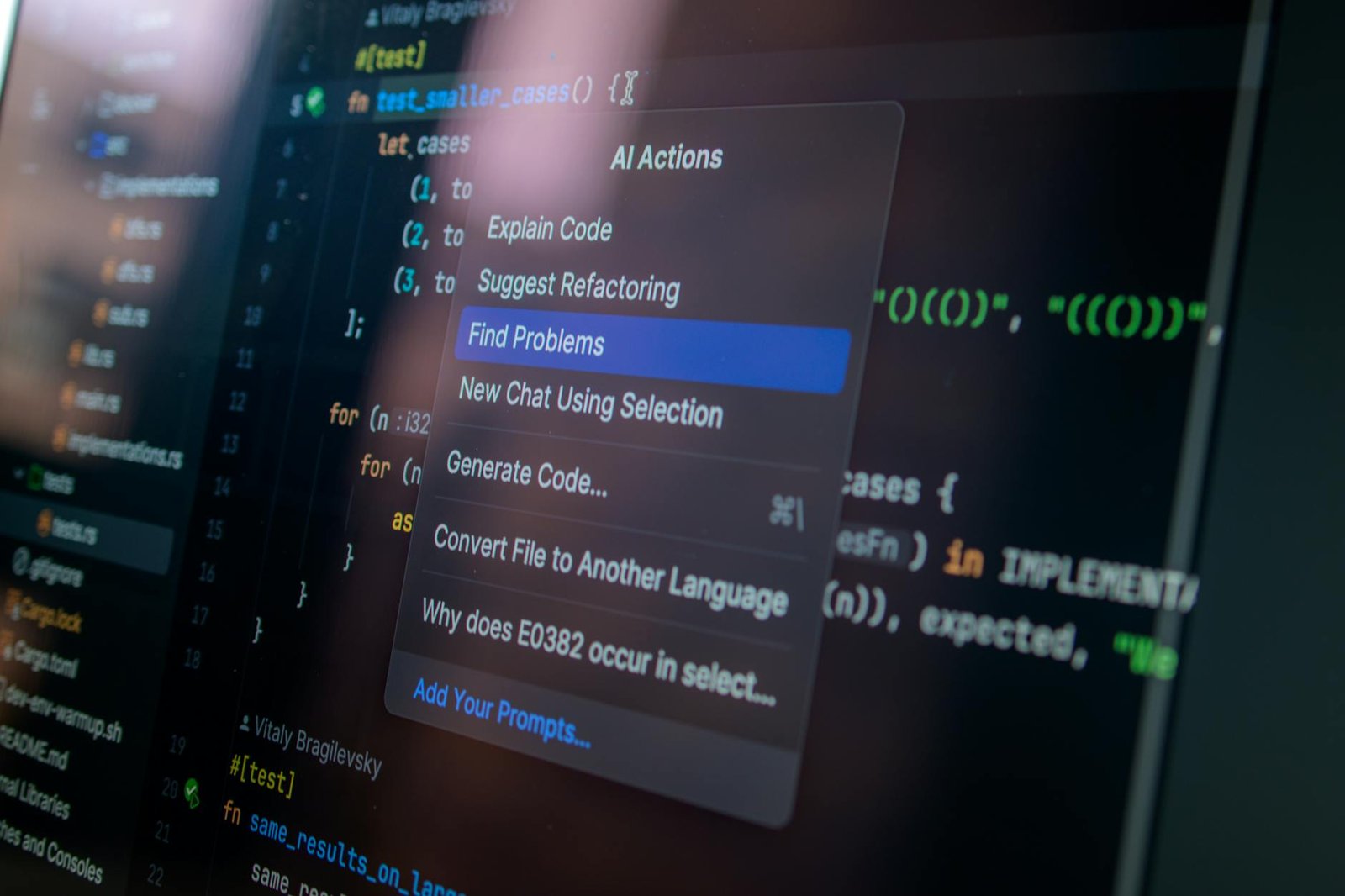

1. Automated Code Review in CI Pipeline

We added GPT-5.4 as a pre-merge reviewer in our GitHub Actions pipeline. The model receives the full diff plus surrounding file context — typically 20K–80K tokens — with reasoning_effort="high". On our Smart HR Payroll system's last sprint, it caught two off-by-one errors in leave-day calculation logic that unit tests did not cover.

The key integration detail: log the usage.completion_tokens_details.reasoning_tokens field, which tracks chain-of-thought token consumption. At high effort, our average reasoning token consumption runs about 1.8× the visible completion tokens. Set a cost alert threshold at 3× to catch runaway requests before they hit your billing cap.
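The ratio check described above can be sketched roughly as follows. This assumes `completion_tokens` includes the reasoning tokens, so visible output is the difference between the two. `reasoning_ratio` and `should_alert` are hypothetical helper names, and the `SimpleNamespace` object only stands in for `response.usage` so the sketch runs without an API call.

```python
from types import SimpleNamespace

ALERT_RATIO = 3.0  # alert threshold suggested in the text above


def reasoning_ratio(usage) -> float:
    """Return reasoning tokens divided by visible completion tokens.

    Assumption: usage.completion_tokens already includes reasoning tokens.
    """
    reasoning = usage.completion_tokens_details.reasoning_tokens
    visible = usage.completion_tokens - reasoning
    if visible <= 0:
        # All output was reasoning (or nothing was produced at all).
        return float("inf") if reasoning > 0 else 0.0
    return reasoning / visible


def should_alert(usage, threshold: float = ALERT_RATIO) -> bool:
    """True when a request spent more on reasoning than the threshold allows."""
    return reasoning_ratio(usage) > threshold


# Stand-in for response.usage with made-up token counts.
sample_usage = SimpleNamespace(
    completion_tokens=2800,
    completion_tokens_details=SimpleNamespace(reasoning_tokens=1800),
)
print(reasoning_ratio(sample_usage), should_alert(sample_usage))
```

In a real pipeline you would call `should_alert(response.usage)` after each request and forward the result to whatever alerting you already run.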

2. Document Processing With Multi-Step Extraction

For the Digital Pawnshop system we built for a client in Surabaya, loan officers extract structured data from handwritten assessment scans plus supporting documents. We pipe the full document batch through GPT-5.4 with a structured output schema, and the model returns a validated JSON object. The 1M window let us retire a separate OCR pre-processing step entirely.

```python
# extraction_prompt, document_images (image URLs or data URLs), and schema
# (a dict with "name" and "schema" keys) are defined elsewhere in the pipeline.
response = client.chat.completions.create(
    model="gpt-5.4",
    messages=[{"role": "user", "content": [
        {"type": "text", "text": extraction_prompt},
        *[{"type": "image_url", "image_url": {"url": img}} for img in document_images],
    ]}],
    response_format={"type": "json_schema", "json_schema": schema},
    reasoning_effort="low",  # fast enough; documents are structured
)
```

3. Agent Loop for ContentForge

ContentForge AI Studio orchestrates multi-step content workflows: keyword research → outline generation → section drafting → SEO validation → publish. After updating the agent loop to use GPT-5.4 with reasoning_effort="medium" for the drafting stage, output quality improved noticeably for technical topics — specifically fewer hallucinated citations on AI and engineering subjects.

One real pitfall: the model has a "rushing" tendency on vague tasks. It pushes forward with assumptions rather than asking for clarification, occasionally overwriting working logic. We added an explicit clarification step in the prompt for any brief under 100 words. After that change, retry rate dropped from 18% to under 4%.
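A minimal sketch of that clarification gate, assuming a plain word count is a good enough proxy for brief length. The instruction wording and the `build_messages` helper are illustrative, not the exact prompt we run in production.

```python
CLARIFY_WORD_LIMIT = 100  # briefs shorter than this get the clarification step

# Hypothetical wording; tune it for your own prompts.
CLARIFY_INSTRUCTION = (
    "Before doing any work, list the assumptions you would have to make. "
    "If any of them could change the output, ask clarifying questions and stop."
)


def build_messages(brief: str, system_prompt: str) -> list[dict]:
    """Build chat messages, adding a clarification step for short briefs."""
    if len(brief.split()) < CLARIFY_WORD_LIMIT:
        system_prompt = f"{system_prompt}\n\n{CLARIFY_INSTRUCTION}"
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": brief},
    ]


messages = build_messages("Write a post about caching.", "You are a technical writer.")
print(messages[0]["content"])
```

The returned list can be passed directly as the `messages` argument of a Chat Completions call.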

Computer Use: The Responses API Requirement

This feature has one critical caveat: it is available through the Responses API only, not the Chat Completions endpoint. If your existing stack is built around /v1/chat/completions, you need a migration step before you can access it.

```python
response = client.responses.create(
    model="gpt-5.4",
    tools=[{"type": "computer_use"}],
    input=[{
        "role": "user",
        "content": "Open the browser, navigate to the admin dashboard, and export the monthly report as CSV.",
    }],
    truncation="auto",
)
```

The 75% OSWorld-Verified score exceeds human expert performance at 72.4%. But OSWorld is a curated benchmark with standardized desktop setups. In production, your CRM or custom internal tool will behave differently from the benchmark environment. Run computer use against a staging environment, log at least 10 end-to-end executions, and measure cost-per-successful-task before committing to production. For anything that writes or deletes production data, add a human approval step in the loop — this is non-negotiable.
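Cost-per-successful-task is simple to compute once each staging run is logged. The sketch below assumes you record a success flag and a dollar cost per run. The `Run` record is a hypothetical shape, and the numbers in the example are placeholders, not measurements.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Run:
    succeeded: bool
    cost_usd: float


def cost_per_successful_task(runs: list[Run]) -> Optional[float]:
    """Total spend across all runs divided by the number of successes.

    Failed runs still count toward spend, which is the point of the metric.
    Returns None when nothing succeeded.
    """
    successes = sum(1 for r in runs if r.succeeded)
    if successes == 0:
        return None
    return sum(r.cost_usd for r in runs) / successes


# Placeholder log of three staging executions.
staging_runs = [Run(True, 0.50), Run(False, 0.25), Run(True, 0.25)]
print(cost_per_successful_task(staging_runs))
```

Dividing by successes rather than by total runs is a deliberate choice: a cheap agent that fails half the time can end up costing more per finished task than a pricier one that rarely fails.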

We evaluated computer use for automated browser testing of our Hotel Management Suite, but latency of 8–15 seconds per action step was too high for CI pipeline requirements. For time-sensitive workflows, Playwright scripting with GPT-5.4 as the logic engine is still faster today. Computer use is better suited for infrequent, human-paced tasks like report generation or form filling.

GPT-5.4 Mini vs Full: When to Choose Which

I would recommend gpt-5.4-mini over the full model for any workflow running high volumes of structured extraction, classification, or routine code generation. At approximately $0.40 input / $1.60 output per million tokens, it costs roughly 6× less on input and 9× less on output than the full model, and it scores 54.38% on SWE-bench Pro, which is more than capable for the majority of production coding tasks.

Use the full model when:

- You need reasoning_effort="high" or "xhigh" for complex multi-step reasoning

- You are using computer use (Mini does not support this feature)

- The task requires nuanced judgment — audit reports, legal analysis, complex debugging across multiple files

Our ContentForge pipeline now uses Mini for outline generation and section templating (high-volume, structured) and the full model only for final content review passes. This cut monthly API spend by roughly 40% compared to running the full model across every stage.

The reasoning.effort Parameter in Practice

This is the most underappreciated addition in GPT-5.4. The five levels let you make explicit cost-quality tradeoffs per request rather than forcing you to choose a different model:

- none: No chain-of-thought. Fastest, cheapest. Good for lookups and formatting tasks.

- low: Light reasoning. Good for extraction, classification, and summarization.

- medium: Default. Balanced for most tasks.

- high: Complex debugging, multi-step planning, and code review.

- xhigh: Reserved for genuinely hard problems. Token cost can be 3–5× that of medium.

One practical tip: log usage.completion_tokens_details.reasoning_tokens per request. It is easy to set high during development and carry it into production without noticing. On a high-throughput pipeline processing 500 requests per day, that difference is significant at end-of-month billing.
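One way to stop a development-time "high" from leaking into production is to pick effort from the task type and cap it with a ceiling. This is a sketch under assumptions: the task labels are hypothetical, the mapping simply mirrors the level descriptions above, and `pick_effort` is not an SDK function.

```python
EFFORT_ORDER = ["none", "low", "medium", "high", "xhigh"]

# Mapping taken from the level descriptions above; task names are hypothetical.
EFFORT_BY_TASK = {
    "lookup": "none",
    "formatting": "none",
    "extraction": "low",
    "classification": "low",
    "summarization": "low",
    "code_review": "high",
    "debugging": "high",
}


def pick_effort(task: str, ceiling: str = "high") -> str:
    """Return the effort level for a task, never exceeding the ceiling.

    Unknown tasks fall back to "medium", the default level.
    """
    effort = EFFORT_BY_TASK.get(task, "medium")
    if EFFORT_ORDER.index(effort) > EFFORT_ORDER.index(ceiling):
        return ceiling
    return effort


print(pick_effort("extraction"))
print(pick_effort("code_review", ceiling="medium"))
```

Setting the ceiling from an environment-specific config value keeps the cap out of individual call sites.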

Migration Path From GPT-5.2

From 11+ years evaluating API migrations across client projects, the pattern that causes the fewest production incidents: swap the model alias in a feature branch → run your existing eval suite → measure token usage and latency delta → promote to production. GPT-5.4 is backward-compatible with Chat Completions; you do not need to refactor your prompt structure unless you specifically want to add reasoning_effort.
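For the "measure token usage and latency delta" step, comparing medians between the two eval runs is usually enough to decide whether to promote. A minimal sketch, assuming you have collected per-request samples from both models. `percent_delta` is an illustrative helper and the sample values are placeholders, not our measurements.

```python
from statistics import median


def percent_delta(baseline: list[float], candidate: list[float]) -> float:
    """Percent change in the median from the baseline run to the candidate run."""
    base = median(baseline)
    if base == 0:
        raise ValueError("baseline median is zero; percent change is undefined")
    return (median(candidate) - base) / base * 100


# Placeholder output-token counts per request from the same eval suite.
old_model_tokens = [200, 200, 200]
new_model_tokens = [300, 300, 300]
print(f"output tokens: {percent_delta(old_model_tokens, new_model_tokens):+.1f}%")
```

The same function works for latency samples. Medians are used here because a few slow outliers would otherwise dominate a mean.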

Watch for three things during migration:

- Increased output verbosity at medium reasoning effort. Some prompts that returned 200 tokens on GPT-5.2 now return 350–500. If you parse structured outputs downstream, test that your JSON extraction still works correctly.

- Inconsistent refusal behavior. The 5.4 refusal threshold changed. Add explicit professional context to prompts that touch security, medical, or financial domains.

- Higher latency on long contexts. At 200K+ tokens, median response time is 25–45 seconds at medium effort. Review your timeout settings and any user-facing loading states before the cutover.

My Verdict: GPT-5.4 vs Claude Sonnet 4.6 for Production

Both models are live on different parts of our production stack right now, which puts me in a position to give a concrete opinion rather than a benchmark comparison.

GPT-5.4 wins on: computer use, the Responses API ecosystem, and structured output reliability across complex schemas. It is also the better choice if you are already deep in the OpenAI tooling ecosystem — Assistants, Files, Threads, and the evolving agent primitives.

Claude Sonnet 4.6 still has the edge on: sustained long-form content quality, fewer unprompted refusals on edge-case professional queries, and overall prose texture for content generation. For ContentForge's final draft stage specifically, I have not moved away from Sonnet 4.6.

The tradeoff I have seen across production deployments is not really about which model is "better" — it is about where each one fits in your workflow graph. Build a routing layer: GPT-5.4 for agent loops and computer use, Mini for high-volume extraction, and your best long-context model for synthesis tasks. Optimize the workflow, not the model selection.
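The routing layer can start as a single function. The sketch below uses hypothetical task labels and a placeholder name for the long-context model. Falling back to gpt-5.4 for unrecognized tasks is my assumption, not a rule from the comparison above.

```python
from dataclasses import dataclass

# Placeholder: substitute whichever long-context model you use for synthesis.
LONG_CONTEXT_MODEL = "your-long-context-model"


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


def route(task_type: str) -> Route:
    """Pick a model for a task type (labels here are hypothetical)."""
    if task_type in {"agent_loop", "computer_use"}:
        return Route("gpt-5.4", "agentic workload")
    if task_type in {"extraction", "classification"}:
        return Route("gpt-5.4-mini", "high-volume structured work")
    if task_type == "synthesis":
        return Route(LONG_CONTEXT_MODEL, "synthesis over a broad corpus")
    # Assumed default for anything unrecognized.
    return Route("gpt-5.4", "default")


for task in ("computer_use", "extraction", "synthesis"):
    print(task, "->", route(task).model)
```

Keeping the reason alongside the model name makes routing decisions easy to log and audit later.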

If you are starting fresh today and the budget allows, start with GPT-5.4 for your agentic workloads. The computer use feature alone will open use cases that simply were not practical before March 2026. Just account for the 272K surcharge in your cost model from day one — that is the number that surprises most teams mid-sprint.
